    permission java.io.FilePermission "<<ALL FILES>>", "read, write, execute, delete";
    permission java.net.SocketPermission "*", "accept, connect, listen, resolve";
    permission java.util.PropertyPermission "*", "read, write";
    permission java.lang.RuntimePermission "*";
    permission java.awt.AWTPermission "showWindowWithoutWarningBanner";

Remove rsa-md5 signing from jdk.jar.disabledAlgorithms in C:\Program Files (x86)\Java\jre1.8.0_241\lib\security\java.security

    EFI> drivers
                T   D
    D           Y C I
    R           P F A
    V  VERSION  E G G #D #C DRIVER NAME                         IMAGE NAME
    == ======== = = = == == =================================== ===================
    ...
    98 00000354 B X X  1  1 Smart Array SAS Driver v3.54        MemoryMapped(0xB,0x
    ...

    EFI> devices
    C  T   D
    T  Y C I
    R  P F A
    L  E G G #P #D #C Device Name
    == = = = == == == =============================================================
    ...
    B4 B X X  1  2  1 Smart Array P410i Controller
    ...

    EFI> drvcfg
    ....
    98 B4 ...
    EFI> drvcfg -s 98 B4
    [Orca menu]

From here we can enable/disable RAID and manage partitions. Once we are done managing the arrays we can reload the partitions into EFI with:

    EFI> reconnect -r
    ...
    EFI> map -r
    ...

Annoyingly, I've found myself having to do this regularly, as the installers all fail if there is anything but a fresh RAID array.

The only other note on the BladeCenter is that it gets its system time from the installed operating system. The way it calculates whether your login session has timed out appears to be based on the difference between your local time and the time on the BladeCenter you logged in to. Thankfully it doesn't check this often, but it does make it impossible to create a local account on the iLO without either setting up an operating system with the correct time or adjusting your system time to 1970.
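To illustrate why a clock mismatch would end a session almost immediately, here is a minimal sketch of the check I suspect is happening. This is a guess at the logic and not iLO code: the function name `session_expired` and the 1800 second timeout are made up for the example.

```shell
#!/bin/sh
# Hypothetical model of the suspected timeout check; not taken from the iLO.
# Usage: session_expired LOGIN_TIME NOW TIMEOUT   (all in seconds)
# Succeeds (exit status 0) when more than TIMEOUT seconds separate the two clocks.
session_expired() {
    elapsed=$(( $2 - $1 ))
    [ "$elapsed" -gt "$3" ]
}

# A blade with no operating system still has its clock near the epoch,
# while the machine running the browser has the real date.
blade_login_time=0
client_now=$(date +%s)

# 1800 is an assumed timeout, chosen only for illustration.
if session_expired "$blade_login_time" "$client_now" 1800; then
    echo "session treated as expired"
fi
```

With both clocks near 1970 the difference stays small, which would explain why setting the local system time back works around the problem.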

All of the installers use the serial output as opposed to the VGA output. If you're looking at the VGA output, they all appear to freeze after loading the ramdisk.

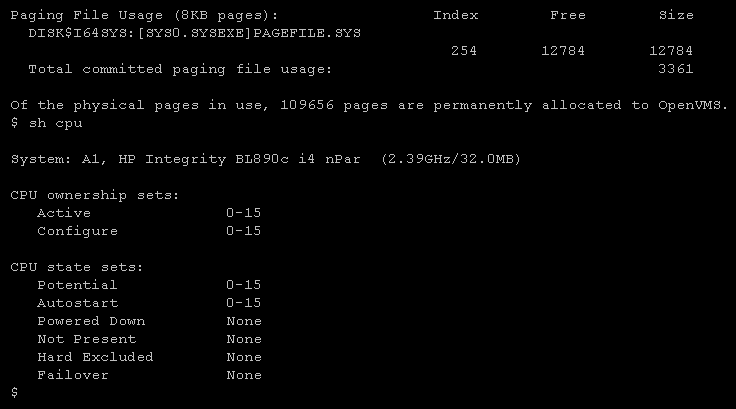

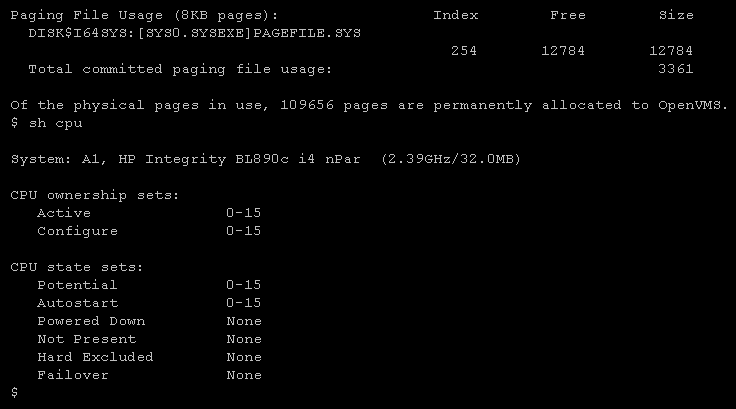

The OpenVMS installer works without issue.

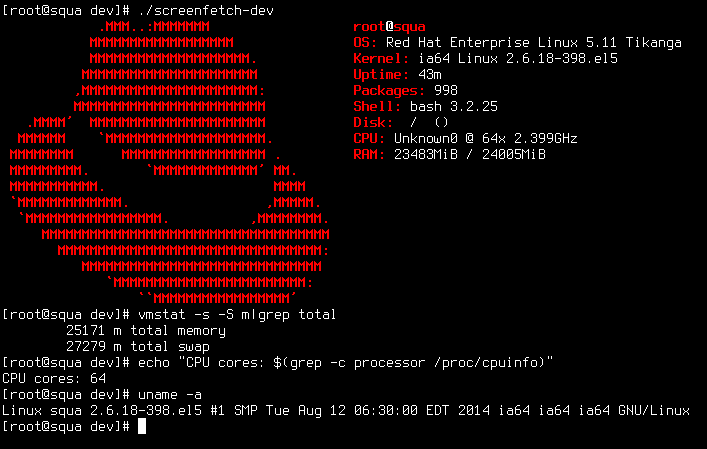

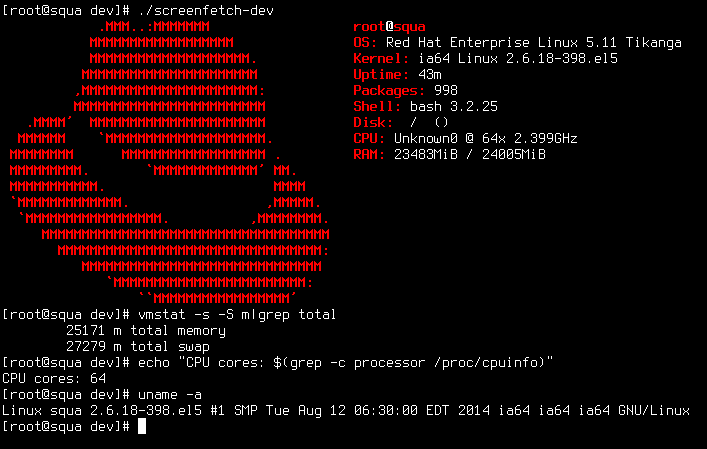

The RHEL 5.11 installer works without issue.

The SLES 11 installer doesn't reach a menu and instead gives unending DMAR and DRHD faults. When I reached out to SUSE support, they would not support this without an LTSS subscription. The output looks like this:

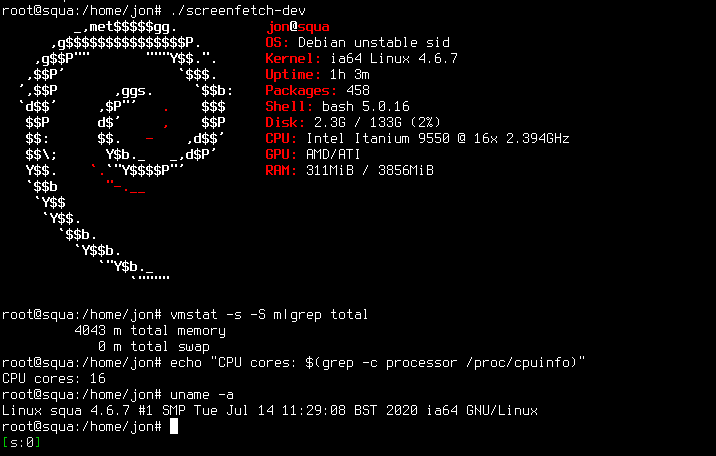

The Debian 10.0.0 installer does not work. There are many new ISOs to try, but none of them install. I suspect the earlier ones fail due to missing drivers for the virtual CD-ROM and the later ones fail due to incompatibility with kernels after version 4.6.7.

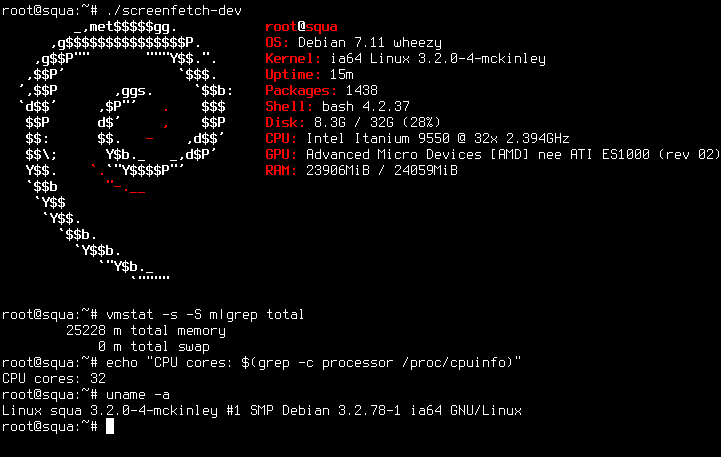

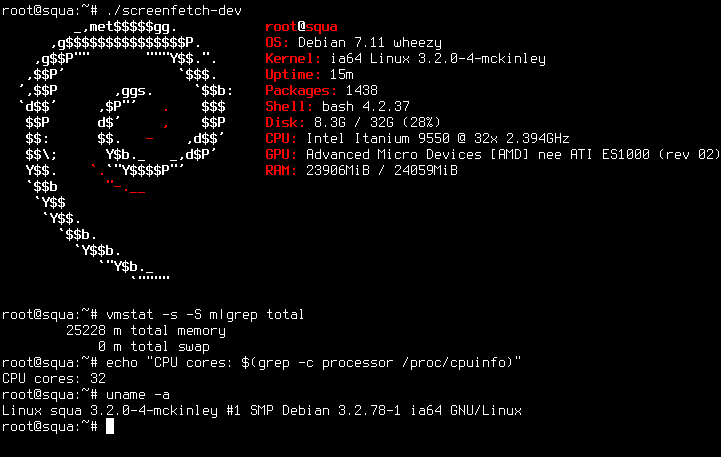

The Debian 7.11 installer has a bug in elilo.sh which stops the bootloader from installing with the message:

    Running "/usr/sbin/elilo" failed with error code "1".

This was reportedly fixed here, but it appears to have snuck back in. It can be worked around in the shell after elilo fails:

    # cd /
    # mount -o bind /proc /target/proc
    # mount -o bind /sys /target/sys
    # umount /target/boot/efi
    # chroot /target /bin/bash
    # cd /root
    # wget https://sources.gentoo.org/cgi-bin/viewvc.cgi/gentoo-src/elilo/ref/elilo.sh
    # sed -i 's/iso8859-1/utf8/' elilo.sh
    # chmod +x elilo.sh
    # ./elilo.sh -b /dev/sda1 # This points to the /boot partition
    # efibootmgr -c -L "Debian" -l 'EFI\debian\elilo.efi'
    #
    # Depending on the partition scheme root may need correcting to point to / in /boot/EFI/debian/elilo.conf
    # exit
    # exit # Exits back to installer

Packages for 7.11 are still served by Debian; just add the below to /etc/apt/sources.list:

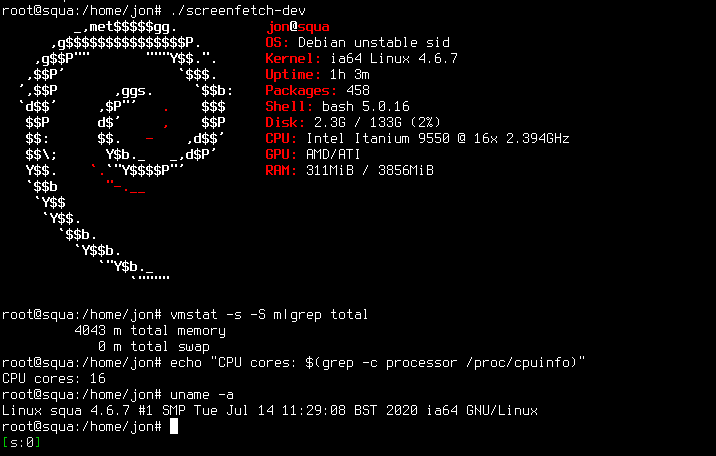

    deb http://archive.debian.org/debian/ wheezy main

Debian 7.11 -> Debian 11 Upgrade

    deb http://ftp.de.debian.org/debian-ports/ sid main

and running

    apt-mark hold linux-image-4.6.7 linux-headers-4.6.7 linux-firmware-image-4.6.7 linux-libc-dev
    apt-get upgrade

then dealing with the left-behind packages manually. I did have to install ifupdown and add /usr/sbin and /sbin to the PATH (via /etc/profile), but otherwise it wasn't too bad.
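A minimal sketch of the PATH change, assuming the login shell is POSIX-compatible and reads /etc/profile. The helper name `path_append` is made up for this example and is not something Debian ships; it only appends a directory when it is not already listed.

```shell
# Fragment to append to /etc/profile (assumes a POSIX-compatible login shell).
# path_append is a hypothetical helper name used only in this sketch.
path_append() {
    case ":$PATH:" in
        *":$1:"*) ;;                       # already in PATH, leave it alone
        *) PATH="${PATH:+$PATH:}$1" ;;     # otherwise add it to the end
    esac
}

path_append /usr/sbin
path_append /sbin
export PATH
```

Because the check makes the fragment idempotent, sourcing /etc/profile more than once does not keep growing PATH.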

It should be possible to upgrade the RHEL or Debian installs with a stage3 Gentoo install but I haven't yet managed it.

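            .text
            .global main
            .proc main
    main:
            .prologue
            alloc loc1 = ar.pfs,0,4,1,0     // 0 inputs, 4 locals, 1 output; old ar.pfs saved in loc1
            mov loc2 = gp                   // save the global pointer
            mov loc3 = rp                   // save the return address
            .body
            add loc0 = @ltoff(msg),gp       // address of msg's linkage table entry
            ;;
            ld8 out0 = [loc0]               // load the address of msg as the argument to puts
            br.call.sptk rp = puts
            mov gp = loc2                   // restore gp after the call
            .restore sp
            mov rp = loc3
            mov ar.pfs = loc1
            mov ret0 = 0                    // return 0
            br.ret.sptk rp
            .endp main

            .data
    msg:    stringz "Hello world!"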

    [jon@squa devel]$ as -x hello.s -o hello.o
    [jon@squa devel]$ gcc hello.o
    [jon@squa devel]$ ./a.out
    Hello world!